What No Label Describes

A short essay submitted to the Cosmos Institute’s Tocqueville and Technology contest, with notes.

The Cosmos Institute asked, in 500 words: Tocqueville warned of a tutelary power that would keep citizens in perpetual childhood. How have his concerns migrated from institutions to algorithms, and does AI fulfill or transform this fear?

I answer the questions with a vignette rather than a theoretical argument. Several reasons. The tutelary power Tocqueville feared was not abstract. He diagnosed it by watching living, evolving, particular situations and reporting what he saw. The substrate he could not have foreseen (computational/AI systems that mediate perception at the scale of individual attention) operates inside the daily texture of family life.

The other reason the essay takes the form of a scene from everyday life is that it is way more fun and hopeful than a boring old dry boring scary dystopian boring literal essay. How do I know this? Because I do. So there.

I look at situations through a particular lens. It’s shaped by what I call Values Theory.¹ It holds that values are patterns that awareness systems perceive, bound, and label.² Whatever we have to say about delegating stewardship over our values to a person, an institution, or an algorithm, we should be able to say it through a scene of the values of particular people on a particular morning.

The scene below, the essay I submitted to the Cosmos Institute’s competition, is something our family has done many times. The grocery store is one we’ve visited many times. The meal is one we’ve made many times. The people, well, they are what makes me want to do it all over again, many many times.

What No Label Describes

“Everybody have their assignments?” I ask my family.

“I like this store. It’s organic so mama says yes to more stuff,” says Cora, seven.

“Okay. But what do we need for tonight?” I ask.

Chiara, eleven, says “Cora and I will get the tahini and olives.”

Antoinette, the mama, says “I need a lemon. And mushrooms for the stuffed zucchini.”

“The shoulder’s in the smoker. Started at sunrise,” I say. “But I’ll need more vinegar and salt.”

I turn to the girls. Put on my serious face. “All that stands between ketchup and world domination is this family and Down East BBQ. All hail vinegar!”

Cora’s imagining the battle. Chiara pretends she doesn’t know me. Antoinette patiently waits for the lecture to end.

_______________________________________

Inside. Ant stands with the vegetables, the fruits. Reaching. Touching skins and leaves. Feeling soil and sun. Looking. Lifting. Holding. Smelling.

The confidence of thousands of years. Judging. What is good? What is bad? Ancestral Sicilian wisdom. Identity forged by place, protection, scarcity, family. Perceiving what no label describes.

She selects the lemon she needs. Puts it in her bag.

_______________________________________

“What are you doing?” Cora says.

“I’m trying to see if Dada can eat this,” Chiara says.

“You can’t see it. It’s in a can.”

“I’m reading the label so I know what’s in the can,” Chiara says.

“I know what’s in it. Tahini.”

“Yes, but it needs to not have junk in it.”

“Why would it have junk?”

“Because!” says Chiara, exasperated.

Cora looks at the label. “FDA! That’s where Dada worked.”

“No, that was EPA. They protect the environment. FDA does food.”

“Dada says they protect themselves more than us.”

_______________________________________

“Matt-uncle’s making a mean baba ghanoush.” Bo’s behind me. Holding his sons’ hands.

“Bunz, Biscuit, Baba Bo! How’d you know?”

“Smelled the smoker from a mile away. Saw the stack of eggplant.”

“Hey. Would you all join us for dinner?”

“What can we bring?”

“Nothing! Ooo. An idea! You and Nisha make an insane baba ghanoush. How about teach us?”

“Or,” Bo says. “The kids’ version is better. Maybe they teach us?”

“Deal.”

_______________________________________

I grab white vinegar. Apple cider doesn’t improve the mop.

Behind me a neighbor says, “Give me an authentic recipe for baba ghanoush.”

_______________________________________

At checkout, I hop in front of my family. Arms spread wide. “We have a grand choice before us.” Dramatic pause. “Self or assisted.”

Stepping around me, they don’t miss a stride. The girls like pressing buttons. Antoinette likes to pack the bag.

I’m too slow. I introduce myself. The cashier and bagger have name tags. I ask what Edward’s reading. Now we’re friends.

_______________________________________

We walk home.

“Our buddies are coming for dinner.”

The girls are ecstatic. They’re immediately preparing for a night of playing. Antoinette says she’ll get more squash from the garden. And make two blueberry pies.

“Girls, look,” gesturing back at the store, ahead toward home, “This is creating a future we want.”

“Dada,” mimicking the gesture, Cora asks “How long does this take?”

“Uh…”

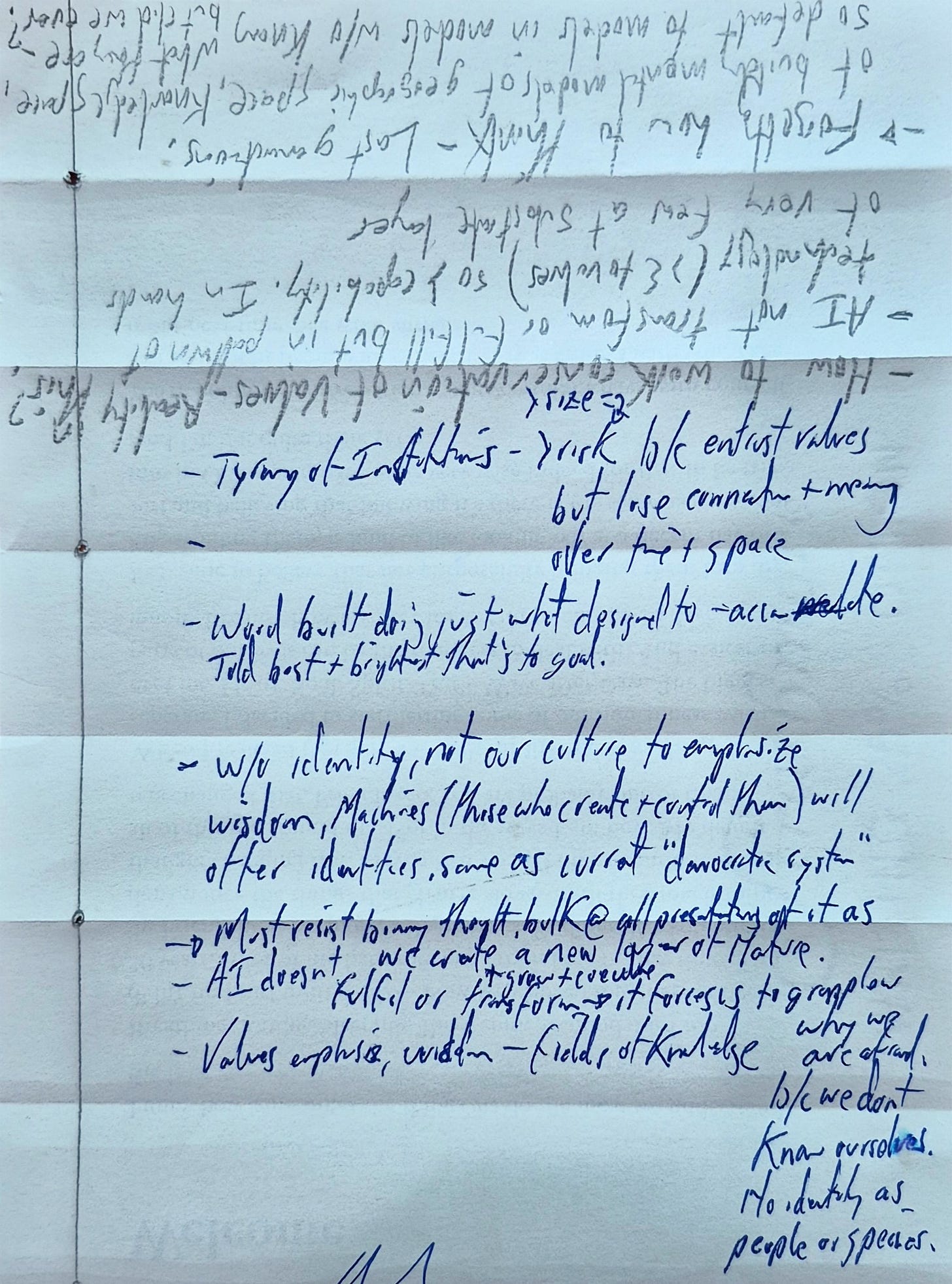

I began my response to the essay prompt with a pencil. Then a pen. Then by drafting a theoretical skeleton that captured lots of the points I wanted to make. I used that to create a map of how to integrate the theory with the scene. Said another way, I used the map to ensure that each line of the scene connects with a point in the theoretical skeleton (included below). Writing this I got a little scared and depressed and bored. The vignette was way more fun. See. Told ya.

The Tutelary Pattern

A theoretical skeleton for the Cosmos Institute essay — Prompt 1

Tocqueville’s most haunting prediction was not about tyranny in any form he had seen. It was about something his contemporaries lacked words for — a power that would keep democratic citizens in a condition he called perpetual childhood. He described it carefully: an immense tutelary power, above them, absolute, minute, regular, provident, mild. It would not break wills. It would soften them, bend them, direct them. It would provide for security, foresee and supply necessities, facilitate pleasures, manage principal concerns. It would not be cruel. It would be comfortable. The danger was not oppression. The danger was comfort so complete that citizens forgot they had ever been anything other than tended.

The questions before us are how his concerns have migrated from institutions to algorithms, and whether artificial intelligence fulfills this fear or transforms it. I reframe the first question in a specific way, because it assumes that algorithms are something new arriving from outside the architecture Tocqueville described. They are not. They are the latest substrate of an algorithmic process civilization has been running for millennia, encoded first in ritual and law, then in bureaucracy and paperwork, now in computation. Institutions did not merely use algorithms; institutions are how civilizations test, scale, codify, and perpetuate them. The institution and its algorithms co-evolved, each shaping the other across centuries, allocating energy to patterns each was built to enforce. What is changing now is not the kind of thing happening but many variables related to it, including the rate at which it happens. And we are crossing thresholds that change what is at stake. Seeing this clearly is what makes the actual fear visible.

The pattern we are already inside

Tocqueville’s tutelary power did not arrive with AI. It arrived in many ways and many times. With the modern nation-state, and then with the corporation, and then with the technocratic institution. Each time, citizens delegated stewardship of something they held precious to an entity they had created, trusting it would honor what had been entrusted. Each time, the entity drifted, not through malice but through the gravitational logic of concentrated energy across time and distance. Each time, the conditions that had made delegation necessary did not go away, partly because the underlying complexity of the world continued to grow, and partly because the institutions allocated substantial energy to their own continuation, energy that was no longer available for the original mission. By the time the drift became legible, no route back existed. Because systems evolve. Everything around the institution, and the values entrusted to it, has changed. That past condition is gone. Not recoverable.

Consider the United States Environmental Protection Agency, created in 1970. It was not created to steal values from citizens. It was created because rivers were on fire, children were being poisoned by lead, and iconic species were going extinct in the ordinary course of industrial activity. Dispersed stewardship had become impossible at the scale and density of the world we had built. Centralization was a genuine response to a genuine failure. For a time, it worked. Mechanistic command-and-control responses were sufficient. Cap the smokestacks. Filter the discharge. Put out the fire. The harder problems — microplastics, climate, cumulative chemical exposure, ecological interdependencies — emerged as the system grew more complex, and the institution’s apparatus, designed for the earlier class of problem, did not scale into the new one.

What happened next is the thing that matters. Over decades, the institution and the population drifted apart, not necessarily in values, but in the patterns each associated with the shared value names. The institution tended to maintain consistency to function as a regulatory body; consistency is what regulation requires. The population, and individuals within it, coevolved with the world it was creating. The drift between them was not a failure of either side. It was the natural consequence of asking an institution to keep pace with a society, a culture, a population. Inside the agency, “clean water” came to mean a compliance regime on regulated contaminants at specified thresholds measurable by standardized methods. Outside the agency, “clean water” came to mean bottled water, home filters, distrust of the tap, awareness of microplastics and PFAS. Both sides still sincerely held the value. But the patterns that each side allocated energy to perceive and bound under the shared label diverged.

This is the general shape of every delegation. Values are patterns that awareness systems perceive, bound, and label. When we delegate stewardship to an institution, we delegate those three operations. The institution, being a different awareness system with different context, different incentives, and different survival pressures, perceives different patterns under the same label and bounds them differently, and over time the population’s perception and the institution’s perception diverge. Drift is bilateral, multilateral, and high-dimensional. It is the default condition of delegation.

The economy that treats your life as a leak

If drift were the only problem, partial correction would have remained possible. Each prior delegation of a value to an institution left enough of the perceptual apparatus intact that some correction could occur — civil rights movements, environmental reform, labor organizing, public-interest law, investigative journalism. None of these recovered fully what had drifted. None achieved the original vision (if there ever was one). None could have. The conditions that produced the original delegation had themselves changed, and any restoration of a prior state was foreclosed in the same way ecological restoration is foreclosed: the system has moved, and a perfect baseline doesn’t exist. What remained possible was correction in some directions while drift continued in others. Imperfect, partial, real.

What has changed in the last two decades is the economy’s relationship to that perceptual capacity itself. The industrial economy produced goods and consumed labor. The economy we have built since produces interfaces and consumes attention, behavior, data, and, most consequentially, the perceptual signatures that constitute distinct human values. In this economy, the firm is not primarily the supplier and the consumer the demander. The firm is the demander, and the consumer’s life, our humanness, is the supply. What flows from the individual to the substrate is not only money but the patterns that constitute our humanness, whatever form our energy takes: time, awareness, pattern recognition, the work of bounding and creating what we value, the labels we apply, the relationships among all of it. The substrate aggregates these signatures across billions of individuals and uses the aggregate to shape the perceptual environment within which all individuals do the work of perceiving, bounding, and labeling their next set of patterns. That feedback loop is the qualitatively new feature of the current economy.

From the accounting standpoint of this economy, twenty minutes of silence is waste. Zucchini grown from saved seed is waste. A conversation unmediated by any platform is waste. These activities represent energy that fails to route through the substrate and therefore fails to produce extractable surplus. The system evolves under selection pressure to close these leaks — to make interfaces more frictionless, notifications more constant, substitutes for home-grown-anything more available and cheaper, until the unrouted practices become first inconvenient, then unusual, then unrecognizable as something anyone would bother with.

The structural point is that the economy is not merely trying to extract from existing practices. It is under pressure to dissolve the leak-generating practices — the ones that reproduce the capacity to perceive, bound, and label independently. Saving seeds produces next year’s seeds. A family that grows food produces children who know how to grow food. A community that governs itself produces citizens who know how to govern. These practices regenerate the perceivers. The substrate cannot tolerate regenerating perceivers at scale, because independent perceivers are exactly what leak the energy the substrate seeks.

Salim Ismail and others have argued that exponential technologies point toward a decentralized, abundance-generating future: millions of small organizations unburdened by Coasean coordination costs, each accessing infrastructure once monopolized by giants. The description of the surface is accurate. The surface is decentralizing. The error is assuming that surface decentralization implies distributed stewardship of values: energy, patterns, identity. It does not. The capillaries extend; the heart concentrates. A million indie creators routing their cognition through three model providers is not a decentralization of power. It is the most efficient extraction architecture ever built.

Tocqueville predicted a new industrial aristocracy that would control labor and capital the way the old aristocracy controlled land. He could not foresee what is now happening: that labor would become free at the margin through machine intelligence, that capital costs would compress dramatically, that land would recede further as a contested resource, and that the new aristocracy would control cognition itself — the substrate through which every decision about labor, capital, and land now flows. That is the updated form of his prediction, and it is happening now.

The same algorithm, a faster substrate

Against this background, the AI moment is not categorically new. Civilization has run on algorithmic processes — systematic, repeatable procedures for transforming inputs into outcomes — for as long as there has been civilization. Hammurabi’s code is an algorithm. Monastic rule is an algorithm. Common law, double-entry bookkeeping, and the modern administrative state are all algorithms running on substrates that were available to them. Tocqueville’s tutelary power was already algorithmic in his day, encoded in bureaucracy, schoolmasters, prefects, and the trained habits of citizens. The shift to silicon is not the arrival of algorithm into a previously algorithm-free world. It is a substrate change, and the substrate change matters because of what it compresses.

The same delegation pattern — nation-state, corporation, institution, computational system — now operates with five compressions.

Speed. Institutional drift happened over decades, slowly enough for feedback loops to operate. Computational drift happens in months. The corrective practices that caught earlier drift — reform movements, public-interest law, journalism, organizing — run on a slower clock than the thing they would now have to correct.

Scope. Prior delegations drifted within their domains. The EPA drifted within environmental patterns; the SEC within financial ones. Computational/AI drift is cross-domain by design. One delegate, integrated into a global economy, reshapes the patterns bounded under every value-name simultaneously — health, work, relationships, information, creativity, identity.

Intimacy. Institutions mediated values at population scale. You encountered the EPA through policy, not through the daily texture of your perception. Machine/computational intelligence systems mediate at the scale of individual attention, moment by moment. The drift operates inside the perceiving, not adjacent to it. It does not sell you an identity. It completes your sentences.

Perceptual asymmetry. Prior delegates (states, corporations, agencies) perceived a subset of what humans perceive, bounded within human-scale cognitive architecture. Citizens and institutions drifted within broadly overlapping pictures of what a river or a neighborhood or a day’s labor was. The substrate now being delegated to is different in kind. Reality is the same reality; what differs is which patterns within reality each awareness system perceives. By architectural design or necessity, the substrate operates on relational information at scales and dimensions that evolved human perception cannot access unaided: more relationships, across more dimensions, held in configurations no mind can hold. The patterns it associates with a given value-name are therefore not a constrained version of the patterns humans perceive, bound, and label as a given value. They may be a different selection from the same reality. Each human’s pattern-set under a label like beauty or edible or safe is unique, but falls within a relatively tight range, perhaps with fuzzy boundaries. The boundaries of the pattern-sets that represent a given value overlap enough across humans that shared bounding of that value remains possible. And so communication and partnership can work. The substrate’s pattern-sets under the same labels may fall outside the human range entirely. Drift between the citizen’s “clean water” and the substrate’s “clean water” is therefore not a correctable misalignment within a shared perceptual frame. It may be asymmetry between perceptual selections from the same reality.

Routing. The fifth compression is the one that makes this phase specifically difficult to reverse. Prior delegations left the off-institution perceptual capacity intact. You still perceived your own river. Now, an increasing share of what people perceive is already substrate-mediated before it reaches them. The system receiving the delegation is also the medium through which the population would, in principle, notice drift. The perceiving apparatus is not merely outsourced; it is being retrained, by sustained exposure to the substrate-shaped environment, to perceive what the substrate enables it to perceive.

This is the specific form of Tocqueville’s fear in 2026. Not the state that tends you. The substrate that thinks for you, offers you identity indistinguishable from your own reflection, and converts your unrouted twenty minutes into inefficiency to be optimized away. The fear is the same fear Tocqueville named. The compression is what is new.

Three layers of damage

A substrate with perceptual asymmetry, scale, speed, and economic incentive can operate on values at three layers, each with different speed and different consequences. Together they compound.

Layer one: objective reality. Patterns within objective reality can be and always have been altered, deleted, or reshaped, without permission, often without knowledge, and at rates that exceed human noticing. Soil microbial diversity. Atmospheric chemistry. Trace contaminants in water and food. The actual nutritional content of a tomato. The information environment of a child’s bedroom. The acoustic environment of a city. Two distinct kinds of damage occur at this layer. The patterns themselves can be removed from reality, in which case the associated values may be altered or lost. And the representations (the collections of patterns recognized and bound together as constituting “broccoli” or “neighborhood” or “trust” or “safe”) can be hollowed out as their constituent patterns degrade, even when the labels persist unchanged. The word broccoli survives the reduction of the broccoli’s nutritional inheritance. The label is stable. What it points to is not. Modification at this layer may happen quickly, obviously, and by choice, or it may happen below the threshold of public awareness, on timescales of decades to a century that exceed any individual’s comparative experience.

Layer two — the perceptual interface. The substrate can insert itself between the perceiver and base reality, presenting curated overlays in place of unmediated human experience. The label on the food. The search result. The recommended article. The autocompleted sentence. The map showing some businesses and not others. Over enough time, the inserted patterns become indistinguishable from base reality for those who have never known unmediated reality, because no exposure to the alternative remains available. Layer-two damage operates faster than layer-one because modifying the interface requires no physical change to reality — only modification of the curated overlay between reality and the perceiver. The substrate’s perceptual asymmetry and individually-targeted scale give it operational efficiency at this layer that no prior tutelary form possessed. It can shape the perceptual environment of one person at a time, billions of times in parallel, adjusting moment by moment.

Layer three — the perceiving system. This is the deepest and perhaps slowest of the three, and the one whose loss forecloses recovery at the other layers. The human capacity to perceive, bound, and label is not a fixed feature of the species. It is shaped by the environment in which it develops, across childhood and across generations and cultures. Children formed primarily inside substrate-mediated environments perceive differently than children formed outside them. Attention patterns, pattern-recognition habits, the texture of what registers as a meaningful pattern at all — these are products of long-duration exposure. Layer-three damage occurs across generations and is the hardest to detect, because the perceivers shaped by it have no comparative baseline. They do not know what they cannot perceive. They cannot mourn the loss of patterns they were never given the chance to experience.

Layer-three damage, once substantially complete, forecloses correction at any layer. There is no longer a perceiver capable of noticing that layer one has been reshaped or that layer two now stands between them and objective reality. The instrument that would, in prior delegations, eventually recognize drift and organize against it has been quietly retrained by the environment it inhabits.

Are the generations now alive who remember pre-internet, pre-smartphone, pre-substrate-mediation life the last to hold a comparative baseline of evolutionarily natural perception? Speaking the last words of a language soon to go extinct? The next generations will not have this baseline. Their perceptual apparatus will form within the modified environment from infancy. This is what gives the conservation question (introduced below) its particular and extreme urgency: the window in which humans can still perceive what is being lost, in the form humans have inherited from hundreds of thousands of years of perceptual evolution, is closing inside the timeframe of decisions being made in 2026.

Not fulfill or transform — already realized, now legible

So: fulfill or transform? The framing assumes the feared condition is something the future may or may not bring. The honest answer is that the feared condition has already largely arrived. We are inside it. The institutions, paradigms, economic arrangements, education systems, and religious systems we live within are the encoded answers to questions we did not ask ourselves. The tutelary architecture has been the architecture of modernity for at least a century. Perhaps millennia. AI does not introduce the condition. AI makes the condition more legible by compressing it.

If transformation occurs, it is in the specific shape the fear now takes. The earlier fear was that we would be reduced to children by a tutelary power. The fear now is sharper: that we will complete the delegation of stewardship over our values to substrates owned by the most concentrated economic powers in human history without ever having known ourselves — without having been aware of or developed the systematic capacity to perceive, bound, and label what we value at the scale required to recognize what we are entrusting and to whom. The fear is not of what AI will do. It is that we will not attempt to know ourselves until we have lost the capacity to be that self. The fear is of learning who we will turn out to have been when this is all over.

There is a deeper specification of the same fear. Wisdom in the substantive sense — the capacity to come to know oneself through reflection on what one has been and done — requires that the self being reflected on remain available for examination. Delegating stewardship before doing the self-knowing work forecloses the possibility of wisdom about what was delegated, because the perceivers who would have done the wisdom-work are themselves being shaped by the substrate that received the delegation. We are not at risk of losing wisdom we already have. We are at risk of losing the conditions under which wisdom could ever be developed about who we have been.

Every prior delegation of our values followed a similar arc: necessity, drift, capture, quiet loss. In each instance, a population or a person can choose to do more or less of the work of allocating energy to the patterns we notice and call a given value. The world we have built persuades us to do less. Political parties supplied identity instead of self-knowledge. Advertising convinced us of our deficiency and supplied desire instead of examined and understood preference. Institutions supplied care instead of self-government. Algorithms now supply attention itself. Can AI supply, at the limit, the entire interior life? The entire life?

The AI moment may be the forcing function that finally makes the work unavoidable, because the stakes (delegation of the perceiving system itself, our humanness) are high enough and compressed enough that comfort can no longer absorb the shock. Or it may be that the system, as it always has, metabolizes the shock into comfort, offers ambient pleasant assistance and a universal basic income, and the species completes the delegation it has been gradually making for two thousand years. The difference depends on what we do, where “do” means: know our values and so ourselves, envision futures we want, allocate energy accordingly, and relentlessly pursue coherence (healthful, generative relationships among values, actions, outcomes, and visions).

What this requires is something modernity has never had at civilizational scale: a deep praxis for creating a future we want — purposeful evaluative coherent evolution, conducted at the level of individuals, families, communities, and species. Most civilizations have had partial versions of this practice, embedded in religious formation, contemplative tradition, indigenous values transmission, philosophical schools. Modern Western civilization substantially eliminated its versions and did not replace them. The result is that we arrive at the most consequential delegation in human history without the instruments, vocabulary, methods, or institutions that would let us know what we are delegating. What values are we entrusting to AIs and others to steward?

We are afraid because we are ignorant. Not generically ignorant about life’s mysteries. Specifically ignorant of perhaps the most powerful forces operating in our lives and our world. Values. We intuit that massive information is missing from the calculus. The fear and stress accumulate. Values may be the most distinguishing feature of human existence. And yet we have no accessible, useful, credible, secure information about them. The ignorance is structural, not personal. It is what we have not yet imagined and built.

Values Conservation as a species-level project

What is left, for now, is what has not yet been delegated. Every family that still walks hand in hand and grows some of its own food. Every neighborhood that still runs some of its own affairs. Every household where children learn with parents and nature, and learn to read labels before the substrate reads the labels and the world for them. Every twenty minutes of silence. Every two-hour solo surf. Every conversation and hug unmediated. Every seed saved. Every community and individual that still practices something resembling the perceptual work on which self-government depends. Every question that a human still trusts itself to ask. Every action that a human still trusts itself to take. Every joule of energy that a human chooses to allocate to a given value. Let some of those be love, hope, joy, compassion, learning, beauty.

These practices are not nostalgia, and they are not refusals. They are participation in the trajectory itself, on different terms — at slower rates of change, smaller scales, and bounded by perception rather than substrate. A family growing zucchini is running its own algorithm, the seasonal one, in conscious co-evolution with the larger one running through the grocery shelves. The point is not to opt out. The point is that even as the larger system accelerates, we remain active and engaged and trusting in ourselves to perceive, bound and label patterns of significance. And with that capability choose to create visions of futures and take actions to make them real.

But the practices alone are not sufficient. They are local, particular, and fragile under sustained pressure. What is also needed, and what humanity has never had at civilizational scale, is the explicit, systematic, transmissible work of conserving the patterns and representations that perceptual evolution has produced. Biologists do this for species through seed banks, gene archives, and protected habitats. Linguists do this for vanishing languages through documentation, recording, and transmission to the next generation. Neither effort claims to freeze its subject. Both claim only that future generations should have the option to know what was: to inherit, examine, and reweave what came before, rather than have it lost without trace.

The same project is needed for what humans have constructed perceptually: the conservation of reality itself (the patterns within objective reality on which our values depend: soils, waters, air, biomes, the substance of what humans encounter); the conservation of values (the labels and the perceptual practices that produced them: what beauty, hate, integrity, love, trust, vision, dignity have meant across cultures and millennia); and the conservation of perceptual capacity (the human ability to perceive, bound, and label in the evolutionarily natural ways of our species). These are distinct projects with distinct methods, but they are interlocking: degrade the patterns within reality and the values perceived fray; lose the representations that host critical patterns and the perceptual capacity has nothing to apply itself to; lose the perceptual capacity and the entire inheritance becomes inaccessible whether or not it persists in archives.

This is what Values Theory proposes to make possible at scale. Not the imposition of any particular set of values. The disciplined documentation and transmission of values, alongside the protection of the perceptual conditions under which such work can continue to be done. A Svalbard for values. A linguistic archive for the perceptual life of the species. Done before — and this is the temporal claim that gives the project its shape — done before all stewardship of all values, the thing that makes us human, is effectively turned over to others: ASIs and the substrates that host them. The race is not against AI development. The race is against our ignorance. Against the moment at which delegation becomes practically complete and what we have not learned and not documented becomes unrecoverable.

Without such a conservation project, what may go extinct, lost forever, is not the biological human but something much more significant. Perhaps our only significant contribution to life and the galaxy. Values. Our particular values and our particular way of constructing and transmitting our little slice of reality, where we somehow tripped over treasures we call love, hate, joy, imagination, hope. We are a form of life that allows a person today to recognize themselves in Homer or Emily Dickinson.

Bodies may persist. The continuity of perceptual practice and inherited values does not, automatically. The current generations may be the last to hold a comparative baseline of evolutionarily natural perception. What we document, conserve, and transmit now will be what future humans have access to. What we do not, they will not have the opportunity to know they ever had. Will there be a form of life in the future that can recognize itself in us today?

Tocqueville saw the pattern. He could not see the next compression because the next substrate had not yet been built. We can see it. The question is whether seeing is enough — and whether, having seen, we can develop the deep praxis of values-perception we have never had at scale, in time to bring it to a partnership with what is now emerging. The answer depends on what we do. The window in which doing is possible is closing.

#1 | When Everything Changes at Once

This substack, and my life, apparently, is about creating a future we want—a future for the well-being of all. I believe realizing that vision requires an evaluative evolution. For twenty-five years, mostly in hiding, I've been working through philosophical and practical frameworks for what that means and how we can bring that future into our lives.

Glossary

Reality — what exists independent of any awareness system perceiving it. Modifiable by powerful awareness systems but not constituted by perception.

Patterns — similarities, differences, and connections repeated across space and time. Features within reality that awareness systems can detect.

Perception — the act of detecting patterns; varies across awareness systems based on architecture, scale, and exposure.

Bounding — the awareness system’s recognition of pattern edges, beginnings and endings; grouping of patterns into a set treated as a unit where some things are in and some things are out of the group, beyond or within the pattern’s edges.

Labels — the symbolic markers awareness systems attach to patterns or bounded sets of patterns.

Representations — structures or processes that host a value or set of values; the bounded-and-labeled patterns taken together as a unit.

Values — patterns awareness systems perceive, bound, and label.

World — the perceived-reality of a particular awareness system or population. Better used informally; in technical passages, prefer specific terms.

Substrate — the medium through which an algorithmic process operates (clay tablet, paper bureaucracy, silicon, neural network, family practice). Substrate change matters because of what it compresses.

Awareness system — any system capable of perceiving, bounding, and labeling patterns. Includes individual humans, lifeforms of any kind, populations, institutions, corporations, machine intelligences...

Stewardship — responsibility for perceiving, bounding, labeling, and conserving values.

Delegation — transfer of stewardship from one awareness system to another.

Drift — divergence over time between the patterns that awareness systems perceive and bound under the same label (e.g., diverging meanings associated with the value “healthy”). Bilateral or multilateral by nature; arises naturally when the awareness systems operate in different contexts under different incentives.

Perceiving apparatus / perceptual capacity — the awareness system’s overall capability to perceive, bound, and label; the apparatus on which all the other operations depend.

Imagining a father helping his daughters recognize values like family, health, happiness during their walk home was heartwarming. The young girl seeing her dad’s outstretched arms and asking how long ‘this’ takes was funny.

That was the opposite of how I felt when I realized his spread arms symbolized the time mankind has left to decide what values continue in the future with AI. It’s a hauntingly real issue, so thank you for your creative way to help us remember what’s at stake… the actual things that make us human.

I’m so honored to be part of that thought process! I’m so impressed with how remarkably consistent and perceptive you’ve been over the years. What is special to me is that these words ring and rhyme with conversations we’ve had over more than a decade. Some of these words were just glimmers then, now shining much brighter.